Without A/B testing (that is, without experimentation), you have no concrete data to drive real growth.

What Is A/B Testing and How Does It Work?

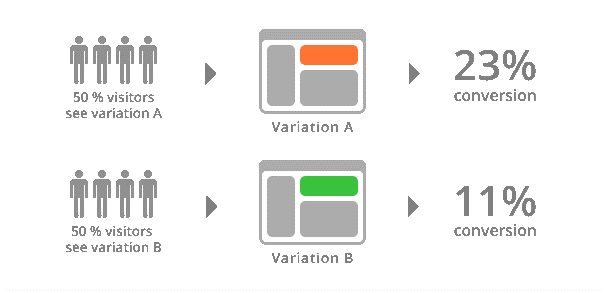

A/B tests are experiments in which two versions of something are compared against each other to see which performs better.

You can A/B test almost anything. Whether it’s an ad, a banner, a button, a landing page, a website, or an app – it can be A/B tested.

When audiences visit your site or app, they are randomly split so that a percentage of users are shown version A, while others are shown version B. Statistical analysis will reveal which version gets the best results.

The A/B method lets you test very specific changes to your website, then collect and analyse the data so that the impact of the changes can be measured and lead to data-driven optimization.

The changes can be as simple as a headline (colour, wording) or as extensive as a complete redesign of the page. Then, half of your traffic is shown the original version, while the second half is shown the modified version to see which one gets the results you’re looking for – revenue, signups, downloads – whatever you’re trying to do with your website.
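
The random split described above can be sketched in a few lines of Python. This is only an illustration, not how any particular A/B testing tool works: the function name `assign_variant`, the experiment label, and the user IDs are all made up for the example. It hashes the user ID so that the same visitor always sees the same version on every visit.

```python
import hashlib


def assign_variant(user_id: str, experiment: str = "headline-test", split: float = 0.5) -> str:
    """Return "A" or "B" for a user; the same user always gets the same answer.

    All names here are hypothetical. `split` is the share of traffic sent to A.
    """
    # Hash the experiment name together with the user ID so that different
    # experiments split the same audience independently of each other.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    # Turn the first 8 hex digits into a number in the range [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "A" if bucket < split else "B"


if __name__ == "__main__":
    # Simulate 10,000 made-up visitors and count how many land in each version.
    counts = {"A": 0, "B": 0}
    for i in range(10_000):
        counts[assign_variant(f"user-{i}")] += 1
    print(counts)
```

Hashing instead of flipping a coin on every page load keeps the experience consistent for returning visitors, which matters when the change being tested is something as visible as a headline or a page layout.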

Why Should You A/B Test?

A/B testing may sound simple, but the insight gained can lead to significant, measurable improvements in user experience and performance. Guesses and assumptions about human behaviour, specifically how people will interact with your site or app, are often wrong, and the unexpected results can only be uncovered by actually testing.

When you test one variable at a time, the cumulative effect of the winning changes can add up to a substantial boost toward your desired outcome, such as a higher conversion rate.

In addition, overall marketing spend can actually decrease as the elements of the user interface become as efficient and effective as possible, especially when it comes to acquiring new customers. A/B testing also helps product developers and designers optimize other goals, such as user engagement and in-product user experience.

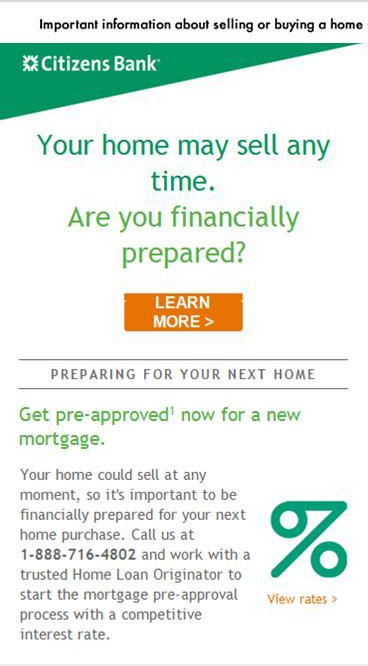

Here’s another example of why you should A/B test (for more examples, go to www.behave.org) – the Citizens Bank experiment:

Should your call to action stress urgency or important information?

Key Performance Indicator (KPI): Unique Open Rate (unique opens/net delivered)

Traffic Source: Existing banking customers. Traffic to site split 50/50 between version A and version B.

Goal: To determine which style of subject line performs better: informative or urgent.

Difference between versions:

Version A: Urgent email subject line: “Act now – get help with selling or buying a home”

Version B: Informational email subject line: “Important information about selling or buying a home”

Which version do you think won?

The Winner: VERSION B!

Compared to version A, the informational copy increased open rates by 49.2%, at 99% confidence.

Why? Perhaps because urgency in subject lines is a widely used marketing tactic – one that has lost its effectiveness due to overuse. This particular tactic is an old trick – marketers are simply trying to trigger our impulsive instincts and motivate us to purchase or engage in some other call to action.

(Source for Citizens Bank example: BEHAVE, https://www.behave.org/case-study/call-action-stress-urgency-important-information/)
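
A percentage improvement like the one quoted above is usually a relative lift (the change measured against the original's rate) rather than a difference in percentage points, although the case study summary here does not say which. The sketch below shows the difference between the two. The open rates in it are invented for illustration and are NOT the Citizens Bank figures, and `relative_lift` is a hypothetical helper name.

```python
def relative_lift(rate_a: float, rate_b: float) -> float:
    """Relative change of version B over version A, as a fraction (0.25 means +25%)."""
    if rate_a <= 0:
        raise ValueError("rate_a must be positive")
    return (rate_b - rate_a) / rate_a


# Hypothetical open rates, NOT taken from the case study.
rate_a = 0.10  # version A: 10% of delivered emails opened
rate_b = 0.15  # version B: 15% of delivered emails opened

print(f"absolute difference: {rate_b - rate_a:.1%} (percentage points)")
print(f"relative lift:       {relative_lift(rate_a, rate_b):.1%}")
```

The same result sounds very different depending on which of the two numbers is reported, so it is worth checking which one a case study is quoting.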

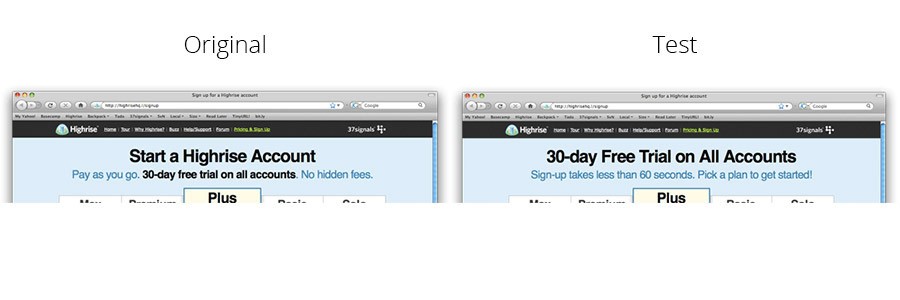

Here’s another example from Highrise, a CRM software company:

Highrise’s Headline & Subheadline Test:

Highrise tested different headline and subheadline combinations to see how they affected sign-ups. The test showed that the variation telling visitors that sign-up is quick produced a 30% increase in clicks. It was also the only variation with an exclamation mark.

These examples show how A/B testing small changes can lead to big wins. So, what’s the process?

The A/B Testing Process

- Collect Data: First, analyze your site to find out where the bottlenecks are, such as pages with low conversion rates or high drop-off rates. Look at user metrics such as page views, time on site, impressions, clicks, CPCs, and more. Here are four tools to help gain this insight:

  - Google Analytics google.ca/analytics

  - Mixpanel mixpanel.com

  - KISSmetrics kissmetrics.com

  - Omniture (Adobe) omniture.com

This information will help to set specific and measurable goals.

- Identify Goals: Decide which metrics you will use to determine whether the changes get better results than the original version. Goals can be anything from website visit duration to email campaign signups to ad viewability. The possibilities are vast, and it is important to explore several of them before narrowing your focus, or you risk missing the best possible solution. A helpful tip to start off: visit your website and jot down all the actions visitors can perform to create a list of possibilities.

When narrowing down your goals, remember that less is more: stay focused and reduce choices so that you get the most accurate results from testing. A straightforward goal could be to improve engagement through a 20% increase in email list signups.

- Generate Hypothesis: Now that you know exactly what you want to improve, you can begin hypothesizing about which changes would make the biggest impact, and then test them. If your goal is to get more people to sign up for an email list or fill in a form, you could try something as simple as removing a few fields to make the process as painless as possible. We all know that attention spans are short and that no one likes filling out lengthy forms (at least initially, while the payoff is unclear). Or what if your original call to action isn’t as motivational as expected and your goal is to increase the response rate? For example, if you want people to donate, you might hypothesize that changing your call to action from “contribute” to “please donate” would be more engaging and direct.

- Generate Variations: Here’s where A/B testing software comes in. It lets you make the desired changes to your website or app and see exactly what they will look like (especially helpful when changing colours or moving elements around). A/B testing software resources include:

  - Optimizely optimizely.com (free trial for 30 days, affordable and easiest to get started, real-time support, multi-functional)

  - Visual Website Optimizer vwo.com (free trial for 30 days, feature rich, includes idea generation tools)

  - Unbounce unbounce.com (focuses solely on landing pages, offers 80+ pre-designed landing page templates)

  - KISSmetrics kissmetrics.com (premium, starting at $150 per month with full year commitment, offers advanced abilities, loads of data)

- Conduct Experiments: Let the testing begin! This is the part where visitors will be randomly sent to either the original version or the modified version to test which performs better. The way users interact with each version is monitored and compared to determine how the changes impacted the experience.

- Analyze Results and Draw Conclusions: Your A/B testing tool will organize the data for you so you can see how your test version did compared to the original. Hopefully your test results support your hypothesis, but if not, there is still more hypothesizing and experimenting fun to be had!
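
To give a feel for the analysis step, here is a minimal sketch of one common way to check whether a difference between two versions is statistically significant: a two-proportion z-test. It is not necessarily the method any of the tools above use, and the visitor and conversion counts are invented for illustration. The function name `two_proportion_z_test` is also just a placeholder.

```python
from math import erfc, sqrt


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates.

    conv_* are conversion counts and n_* are visitor counts per version.
    Uses the normal approximation, which assumes reasonably large samples.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    # Pooled rate under the assumption that both versions perform the same.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        raise ValueError("standard error is zero (no conversions, or everyone converted)")
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution.
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


# Hypothetical counts: 5,000 visitors per version.
z, p = two_proportion_z_test(conv_a=200, n_a=5000, conv_b=260, n_b=5000)
print(f"z = {z:.2f}, p = {p:.4f}")
print("significant at 99% confidence" if p < 0.01 else "not significant at 99% confidence")
```

A p-value below 0.01 corresponds to the "99% confidence" wording often seen in case studies. In practice the testing tool reports this for you, but knowing roughly what sits behind the number helps you avoid calling a winner too early.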

Conclusion

Every business, website, campaign, and app is different, and A/B testing shows that common marketing wisdom often doesn’t apply. The advantages of improving conversion rates by A/B testing are well worth the relatively low investment of time and money.

See below for more surprising A/B testing discoveries that will make you want to test everything!

http://blog.granify.com/6-shocking-ab-test-wins-that-increase-conversion/

Want to learn more? Connect with our team at sales@clearpier.com